Video — Deep Dive: Quantizing Large Language Models

Quantization is an excellent technique to compress Large Language Models (LLM) and accelerate their inference.

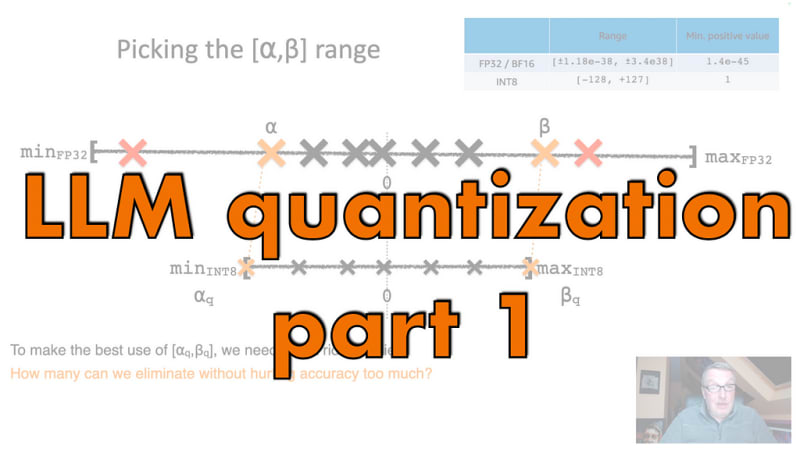

In this 2-part video, we discuss model quantization, first introducing what it is, and how to get an intuition of rescaling and the problems it creates. Then we introduce the different types of quantization: dynamic post-training quantization, static post-training quantization, and quantization-aware training. Finally, we look at and compare quantization techniques: PyTorch, ZeroQuant, bitsandbytes, SmoothQuant, GPTQ, AWQ, HQQ, and the Hugging Face Optimum Intel library 😎

Part 1:

Part 2: