It lets you store files (objects) in buckets (directories). Bucket names must be globally unique, across all regions and accounts, even though each bucket is created in a specific region.

The naming convention it maintains:

Objects (files) have keys. The key is the full path of the object inside the bucket.

Max Object size: 5TB

More than 5 GB in a single upload: use "Multi-part Upload"
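A multi-part upload splits one large file into numbered parts. The sketch below only plans the parts (it does not talk to AWS). It assumes S3's documented limits of a 5 MiB minimum part size (except for the last part) and at most 10,000 parts; the function name `plan_parts` and the 100 MiB default are made up for illustration.

```python
MIN_PART_SIZE = 5 * 1024 ** 2   # 5 MiB: minimum size for every part except the last
MAX_PARTS = 10_000              # upper limit on parts in one multi-part upload


def plan_parts(total_size: int, part_size: int = 100 * 1024 ** 2):
    """Return (part_number, offset, length) tuples that cover total_size bytes."""
    if total_size <= 0:
        raise ValueError("total_size must be positive")
    if part_size < MIN_PART_SIZE:
        raise ValueError("part_size is below the 5 MiB minimum")
    part_count = -(-total_size // part_size)  # ceiling division
    if part_count > MAX_PARTS:
        raise ValueError("too many parts; choose a larger part_size")
    parts = []
    for number in range(1, part_count + 1):   # part numbers start at 1
        offset = (number - 1) * part_size
        length = min(part_size, total_size - offset)  # last part may be shorter
        parts.append((number, offset, length))
    return parts


if __name__ == "__main__":
    for part in plan_parts(250 * 1024 ** 2):
        print(part)
```

Each tuple says which byte range of the file goes into which part number; an upload tool would send these ranges one by one (or in parallel) and then ask S3 to assemble them.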

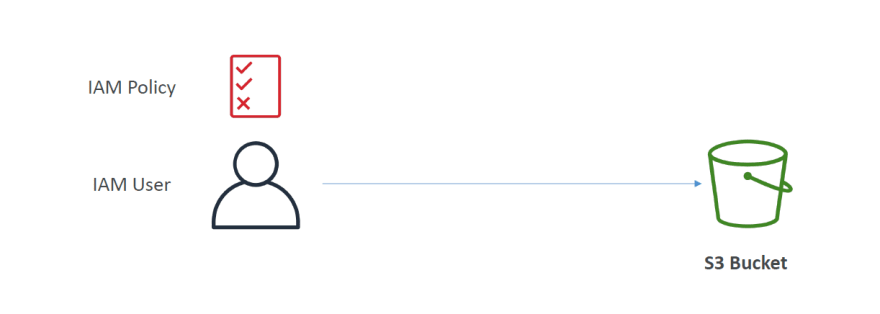

S3 Security

To let an anonymous user access the bucket, we set a bucket policy.

For an IAM user, we update the user's IAM policy.

For EC2 instances, we first create an IAM role that carries the required IAM policy and then attach that role to the instance.

For an IAM user from another account (cross-account access), we set a bucket policy.
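As an illustration of the anonymous-access case, here is a minimal sketch that builds a public-read bucket policy as JSON. The helper name `public_read_policy` and the bucket name `example-bucket` are placeholders, not something from these notes, and the policy only takes effect if the bucket's public access settings allow it.

```python
import json


def public_read_policy(bucket_name: str) -> str:
    """Build a bucket policy that lets anyone read objects in bucket_name."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PublicReadGetObject",
                "Effect": "Allow",
                "Principal": "*",                  # "*" means anyone, including anonymous users
                "Action": "s3:GetObject",          # read objects only, no listing or writing
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }
    return json.dumps(policy, indent=2)


if __name__ == "__main__":
    print(public_read_policy("example-bucket"))  # placeholder bucket name
```

The printed JSON can be pasted into the bucket's policy editor. For cross-account access the shape is the same, but the `Principal` would name the other account instead of `"*"`.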

Bucket Policies

We generally keep the "Block Public Access" setting "On" so that our S3 buckets stay private and are not publicly available.

Making websites Public

S3 Versioning

Objects uploaded before versioning is enabled get "null" as their Version ID. Once versioning is on, if you change the file and upload it again under the same name, the new file gets a generated Version ID such as "gfjakfjkfj...", while the older "null" version is kept underneath it. If we delete the new version by its ID ("gfjak..."), the "null" version becomes the current one again. Moreover, if we delete a file without specifying a version, AWS does not remove anything; it adds a "delete marker" as the newest version, which hides the file. Later we can delete that delete marker to restore the file.
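The behaviour above can be mimicked with a small in-memory toy model. This is not the AWS API, just a sketch of the rules for a single object key; the class name `VersionedKey` and the `v1`, `v2` style IDs are invented (real version IDs are opaque strings).

```python
import itertools

DELETE_MARKER = object()  # stands in for S3's delete marker


class VersionedKey:
    """Toy model of the version stack behind one object key."""

    def __init__(self):
        self.versions = []               # oldest first: (version_id, body)
        self._ids = itertools.count(1)

    def put(self, body, versioning_enabled=True):
        version_id = f"v{next(self._ids)}" if versioning_enabled else "null"
        if not versioning_enabled:
            # without versioning, an overwrite replaces the "null" version
            self.versions = [v for v in self.versions if v[0] != "null"]
        self.versions.append((version_id, body))
        return version_id

    def delete(self, version_id=None):
        if version_id is None:
            # plain delete: nothing is removed, a delete marker goes on top
            marker_id = f"v{next(self._ids)}"
            self.versions.append((marker_id, DELETE_MARKER))
            return marker_id
        # delete by ID: that version (or delete marker) is removed for good
        self.versions = [v for v in self.versions if v[0] != version_id]
        return version_id

    def get(self):
        """Return the current body, or None if the key looks deleted."""
        if not self.versions or self.versions[-1][1] is DELETE_MARKER:
            return None
        return self.versions[-1][1]


if __name__ == "__main__":
    key = VersionedKey()
    key.put("old file", versioning_enabled=False)   # gets the "null" ID
    new_id = key.put("new file")                     # gets a generated ID
    key.delete(new_id)                               # "null" version is current again
    marker = key.delete()                            # file now looks deleted
    key.delete(marker)                               # removing the marker restores it
    print(key.get())
```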

AWS Access Logs

To set this up, we need 2 buckets: one for normal usage and one that receives the logs. Assume that you have a bucket named "Karim-demo-2022" containing files such as "index.md" and "cofee.md". Create a second bucket named "Karim-access-logging-demo". Then open "Karim-demo-2022", go to Properties, find Server access logging, click Edit, enable it, set the target to "s3://Karim-access-logging-demo/logs" and save the changes. Requests made against "Karim-demo-2022" will then show up as log files in the logging bucket, usually within about an hour.
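The same setting can be described as data. The sketch below builds the arguments in the shape that boto3's `put_bucket_logging` call expects (`Bucket` plus `BucketLoggingStatus`); it does not call AWS itself, and the helper name `logging_request` is made up. It also refuses to log a bucket into itself, since that would make the logs generate more logs.

```python
def logging_request(source_bucket: str, target_bucket: str, prefix: str = "logs/") -> dict:
    """Build keyword arguments describing server access logging for source_bucket."""
    if source_bucket == target_bucket:
        # logging a bucket into itself would create an endless logging loop
        raise ValueError("the logging bucket must differ from the monitored bucket")
    return {
        "Bucket": source_bucket,
        "BucketLoggingStatus": {
            "LoggingEnabled": {
                "TargetBucket": target_bucket,   # where the log files are written
                "TargetPrefix": prefix,          # key prefix for every log file
            }
        },
    }


if __name__ == "__main__":
    print(logging_request("Karim-demo-2022", "Karim-access-logging-demo"))
```

With boto3 installed and credentials configured, the result could be passed along as `s3_client.put_bucket_logging(**logging_request(...))`; that step is left out here so the sketch runs without an AWS account.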

S3 Replication

You can replicate a bucket across regions (Cross-Region Replication, CRR) or within the same region (Same-Region Replication, SRR). For this, you must enable versioning on both the source and the destination bucket. The buckets can be in different accounts, and you must give S3 the proper IAM permissions to copy the objects.

Let's create a bucket named "replica-demo3737" in a region far away from your main bucket "Karim-demo-2022". Don't forget to enable versioning before pressing Create. Now go to "Karim-demo-2022" and open Management. Under Replication rules, create a rule. Set the replication rule name to "Demo-rule". Set the rule scope to "Apply to all objects in the bucket". Under Destination, choose the bucket "replica-demo3737". Under IAM role, choose to create a new IAM role, then Save.

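Objects that were already in the bucket before the rule was created are not replicated automatically; only new uploads are (existing objects can be copied separately with a Batch Replication job). Now go to your bucket "Karim-demo-2022" and upload "Cofee.png" again, which you had uploaded into the bucket once before. This adds a new version of the picture. Then go to "replica-demo3737" and you will see the same picture with the same version ID.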
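The rule created in the console can also be written down as a configuration document. The sketch below builds one in the shape used by boto3's `put_bucket_replication` (`ReplicationConfiguration` argument) and checks the versioning requirement first. The helper name and the role ARN in the usage line are placeholders; nothing here contacts AWS.

```python
def replication_config(role_arn: str, destination_bucket: str,
                       source_versioning: str, destination_versioning: str,
                       rule_id: str = "Demo-rule") -> dict:
    """Build a replication configuration that applies to all objects."""
    if source_versioning != "Enabled" or destination_versioning != "Enabled":
        # replication needs versioning on both buckets
        raise ValueError("versioning must be Enabled on source and destination")
    return {
        "Role": role_arn,  # IAM role S3 assumes to copy the objects
        "Rules": [
            {
                "ID": rule_id,
                "Priority": 1,
                "Status": "Enabled",
                "Filter": {},  # empty filter: apply to all objects in the bucket
                "DeleteMarkerReplication": {"Status": "Disabled"},
                "Destination": {"Bucket": f"arn:aws:s3:::{destination_bucket}"},
            }
        ],
    }


if __name__ == "__main__":
    config = replication_config(
        "arn:aws:iam::123456789012:role/example-replication-role",  # placeholder ARN
        "replica-demo3737",
        source_versioning="Enabled",
        destination_versioning="Enabled",
    )
    print(config)
```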

S3 Storage Class

Durability vs Availability

S3 Standard - General Purpose

S3 Infrequent Access

Amazon S3 Standard Infrequent Access (S3 Standard-IA)

99.9% availability. Mostly used for disaster recovery and backups.

Amazon S3 One Zone Infrequent Access (S3 One Zone-IA)

High durability (99.999999999%) within a single AZ, but data may be lost if that AZ is destroyed. 99.5% availability. Mostly used for storing secondary backup copies of on-premises data, or data one can recreate.
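To get a feel for these percentages, here is a small arithmetic sketch. It treats availability as the share of a 365-day year in which the service is reachable and durability as the yearly chance that a given object survives, which is a simplification; the function names are made up.

```python
HOURS_PER_YEAR = 365 * 24


def max_downtime_hours(availability_percent: float) -> float:
    """Hours per year the service may be unreachable at this availability."""
    return (1 - availability_percent / 100) * HOURS_PER_YEAR


def expected_objects_lost_per_year(object_count: int,
                                   durability_percent: float = 99.999999999) -> float:
    """Average number of objects lost per year at this durability."""
    return object_count * (1 - durability_percent / 100)


if __name__ == "__main__":
    print(max_downtime_hours(99.9))    # Standard-IA: about 8.76 hours per year
    print(max_downtime_hours(99.5))    # One Zone-IA: about 43.8 hours per year
    print(expected_objects_lost_per_year(10_000_000))  # about 0.0001 objects per year
```

So with 11 nines of durability and 10 million stored objects, you would expect to lose roughly one object every 10,000 years, while the availability figures translate into hours of possible downtime per year.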

S3 Glacier Storage Classes

Low-cost object storage meant for archiving or backup. You pay for storage and also for object retrieval.

Amazon S3 Glacier Instant Retrieval

Millisecond retrieval, great for data accessed once a quarter. You need to keep your data for a minimum of 90 days.

Amazon S3 Glacier Flexible Retrieval

Expedited (1-5 minutes), Standard (3-5 hours), Bulk (5-12 hours, free). You need to keep the data for a minimum of 90 days.

Amazon S3 Glacier Deep Archive - for long term storage

Standard (12 hours), Bulk (48 hours). Lowest cost.

Amazon S3 Intelligent Tiering

Small monthly monitoring and auto tiering fee. Moves objects automatically between Access Tiers based on usage. There are no retrieval charges in S3 Intelligent Tiering.

Frequent Access tier (automatic): default tier.

Infrequent Access tier (automatic): objects not accessed for 30 days

Archive Instant Access tier (automatic): objects not accessed for 90 days

Archive Access tier (optional): configurable from 90 days to 700+ days

Deep Archive Access tier (optional): configurable from 180 days to 700+ days
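The three automatic tiers above boil down to a simple rule on how long an object has gone without being accessed. The sketch below encodes only those automatic thresholds (30 and 90 days) and ignores the optional archive tiers; the function name `automatic_tier` is invented for illustration.

```python
def automatic_tier(days_since_last_access: int) -> str:
    """Pick the automatic Intelligent-Tiering access tier for an object."""
    if days_since_last_access < 0:
        raise ValueError("days_since_last_access cannot be negative")
    if days_since_last_access >= 90:
        return "Archive Instant Access"
    if days_since_last_access >= 30:
        return "Infrequent Access"
    return "Frequent Access"   # default tier; accessing an object moves it back here


if __name__ == "__main__":
    for days in (0, 45, 120):
        print(days, automatic_tier(days))
```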

Now let's create a bucket named "s3-storage-demo-2022" and then upload a picture named "Cofee.png". While uploading, look at the Properties section, where the storage class is selected.

We choose Standard-IA and click Upload. You can also change the storage class later: open the object's Properties, go to Storage class and click Edit.

Also, you can set rules for the whole bucket. Go to Management, then Lifecycle configuration, and create a rule. Give it the name "DemoRule". Select "Apply to all objects in the bucket". Select "Move current versions of objects between storage classes". Then choose which storage class the objects should move to after a given number of days.

Move your objects between storage classes
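A lifecycle rule like "DemoRule" can be sketched as a configuration document too. The helper below builds one in the shape used by boto3's `put_bucket_lifecycle_configuration` (`LifecycleConfiguration` argument) without calling AWS. The helper name and the 30 and 90 day values in the usage example are assumptions, not values from these notes.

```python
# Storage class names as the S3 API spells them for lifecycle transitions
TRANSITION_CLASSES = {
    "STANDARD_IA", "ONEZONE_IA", "INTELLIGENT_TIERING",
    "GLACIER_IR", "GLACIER", "DEEP_ARCHIVE",
}


def lifecycle_config(rule_id: str, transitions: list) -> dict:
    """Build a rule that moves all current object versions between classes.

    transitions is a list of (days_after_creation, storage_class) pairs.
    """
    previous_days = -1
    for days, storage_class in transitions:
        if storage_class not in TRANSITION_CLASSES:
            raise ValueError(f"unknown storage class: {storage_class}")
        if days <= previous_days:
            raise ValueError("transition days must be strictly increasing")
        previous_days = days
    return {
        "Rules": [
            {
                "ID": rule_id,
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # empty prefix: apply to all objects
                "Transitions": [
                    {"Days": days, "StorageClass": storage_class}
                    for days, storage_class in transitions
                ],
            }
        ]
    }


if __name__ == "__main__":
    # example schedule (assumed values): Standard-IA after 30 days, Glacier after 90
    print(lifecycle_config("DemoRule", [(30, "STANDARD_IA"), (90, "GLACIER")]))
```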

S3 Object Lock & Glacier Vault Lock

S3 Object Lock

- Adopts the WORM (Write Once Read Many) model and blocks an object version from deletion: you can write once and ensure that no one is able to delete the object for a fixed amount of time.

Glacier Vault Lock

Adopts the WORM model, locks the vault policy against future edits, and is helpful for compliance and data retention.

S3 Encryption

Your objects can be encrypted. There are 3 models.